How to Conduct a DIY SEO Audit for Your Website: Backlinks and More

Google clocks in over three and a half billion searches each day. It doesn't matter if you're a lawyer, doctor, or an artist — users search for related keywords and phrases millions of times a day. That alone is an amazing opportunity for businesses and brands to be seen, yet the vast majority of online sites average zero visitors per day.

That's because they aren't optimized for search engines, so Google passes them over during searches. It isn't that difficult to optimize your website for search engines, a practice known as search engine optimization (SEO), and frankly, if you don't know how, you should learn.

If businesses want to be found online, they need solid SEO. It helps build brands and sell products. To start on your SEO journey, you should first conduct an audit of your website and optimize it to the best of your ability — which is easy!

There are a number of steps you can take to make your website reach your target audience. This article will explain how you can audit your website for SEO and which tools can help.

Ask yourself: how is my page ranking?

First, we have to discuss search engine ranking, usually shortened to “search rank.” Search rank is your website's position on a search engine results page for a particular search. Put simply, if someone searches for your business or product, search rank is how close you are to being the first result.

The whole point of SEO best practices is to increase search ranking and get your website to the top of the search engine’s results.

Search rankings change from engine to engine; Google, Bing, Yahoo, and others use different algorithms to rank results. They also vary from search term to search term; a particular site may (and likely will) rank higher in some searches than in others. But across the board, it is true that the higher your site's ranking, the more traffic you'll get.

Internet users simply trust the first search results more, leading to more clicks. How high your website ranks depends on a number of factors, and we'll explain these factors so that your site can be better poised to take position zero, the very top of a search engine's results page.

Our recommended tool

There are several major search analysis programs that explain your search rankings. Searchmetrics Suite is a popular choice. Google Analytics is also a must for all aspects of SEO management.

Make sure your website’s pages are “indexed”

The most basic factor affecting your search ranking is whether your site is indexed. If search engines do not have your site indexed, you don’t rank. Period.

Google, for example, uses a website crawler called GoogleBot to visit every website it can. This bot determines whether websites are legitimate and safe, then stores them in the Google index.

Being in the Google index means that your site appears in search results. Not being indexed means not showing up. It's that simple.

The simplest way to make sure your site (and each of its subpages) is indexed on Google is to search for it using the site: search operator.

You might type “site:homehypemonkey.com” into Google to see how many of homehypemonkey.com's pages are indexed. Say Google finds about 700 results. If the site actually has 800 pages, it's clear that Google has not indexed about 100 of them.

Recommended tool

Use Google Search Console or a website crawler, like Ahrefs Site Audit or Screaming Frog SEO Spider (discussed more later). They'll give detailed accounts of any non-indexed pages, with the reasons why and how to fix them.

Does your website restrict access to certain pages?

One of the most common reasons your site might not be indexed by Google is that you are accidentally telling Google not to index it.

This happens more often than you might think. Your site developer might restrict access to your site during construction and forget to lift the restrictions once construction is done. This is easy to check in the robots.txt file, which is a set of rules your site sets for website crawlers.

You can check the robots.txt file by typing your domain name into your browser's address bar and adding /robots.txt to the end. For example, suppose you visit “homehypemonkey.com/robots.txt” and see three paths listed after the instruction "Disallow:". Crawlers are told not to visit those pages, so they are effectively “disallowed” from Google’s searches.
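
If you'd rather test specific pages than read the rules by eye, here is a minimal sketch using Python's built-in robots.txt parser. The robots.txt contents and the paths below are made up for illustration; swap in your own.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents, for illustration only.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /drafts/
Disallow: /admin/
Disallow: /old-site/
"""

def blocked_paths(robots_txt, paths, user_agent="Googlebot"):
    """Return the paths that robots_txt tells user_agent not to crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in paths if not parser.can_fetch(user_agent, path)]

# Check a handful of (made-up) pages against the rules above.
print(blocked_paths(SAMPLE_ROBOTS_TXT, ["/", "/blog/", "/drafts/new-post", "/admin/"]))
```

Any page you expect to see in search results should not appear in the returned list.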

Recommended tool

Every web hosting service has its own interface for changing robots.txt files, but luckily, most are very simple. For example, Weebly allows users to edit robots files on a simple page known as "SEO Settings."

Review your website’s site map

Other important features of robots.txt files, and indexing in general, are XML sitemaps. Usually referenced by a "Sitemap:" line in the robots.txt file (often at the bottom), XML sitemaps are XML files containing links to your website's various pages.

If you think of your website as a house, website crawlers like GoogleBot use the sitemap as a set of rules for how to tour the house — what room to start in, where to go next, and which rooms are the most important.

Perhaps the most important purpose of the XML sitemap is to choose which pages will make the best landing pages for search results (i.e., your all-important first impression).
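
To see exactly which pages your sitemap is pointing crawlers to, you can list its entries. The sketch below assumes a standard sitemap in the sitemaps.org format; the example.com URLs are placeholders.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard XML sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Placeholder sitemap, for illustration only.
SAMPLE_SITEMAP = """<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/services</loc></url>
  <url><loc>https://www.example.com/blog</loc></url>
</urlset>
"""

def sitemap_urls(xml_text):
    """Return every <loc> URL listed in a sitemap, in document order."""
    root = ET.fromstring(xml_text.encode("utf-8"))
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]

for url in sitemap_urls(SAMPLE_SITEMAP):
    print(url)
```

If an important page is missing from the output, it's missing from the tour you're giving the crawler.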

Recommended tool

For WordPress users, the Yoast SEO plugin will do the job. Squarespace, Wix, and the like all have their own systems (again, all very simple), and for those without a content management system, the free version of Screaming Frog SEO Spider works.

Check for multiple versions of the same website

You want users to only access one version of your website in their browsers.

Someone searching for your site (homehypemonkey.com, for example) could search for:

- homehypemonkey.com

- www.homehypemonkey.com

- http://www.homehypemonkey.com

- http://homehypemonkey.com

- https://www.homehypemonkey.com

- https://homehypemonkey.com

That's six different ways to go to the same place (essentially). Only one of these should actually be browsable; the rest should all redirect to the one browsable version.
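
As a rough sketch of how you might audit this: a browser turns a bare domain into an http:// or https:// address, so the six entries above boil down to four real URLs. The helper names and the sample results below are hypothetical; in practice you would fill in the "final URL" for each variant by loading it and noting where you end up.

```python
from itertools import product

def url_variants(domain):
    """Build the scheme/www combinations for a bare domain such as 'example.com'."""
    return [f"{scheme}://{prefix}{domain}"
            for scheme, prefix in product(("http", "https"), ("", "www."))]

def non_canonical(final_urls, canonical):
    """Given {requested URL: URL after redirects}, list requests that did not end at canonical."""
    target = canonical.rstrip("/")
    return [start for start, end in final_urls.items() if end.rstrip("/") != target]

# Made-up results: three variants redirect correctly, one does not.
observed = {
    "http://example.com": "https://www.example.com/",
    "http://www.example.com": "https://www.example.com/",
    "https://example.com": "https://example.com/",
    "https://www.example.com": "https://www.example.com/",
}
print(url_variants("example.com"))
print(non_canonical(observed, "https://www.example.com"))
```

Anything in the second list is a version of your site that is still browsable on its own and needs a redirect.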

Recommended tool

Creating .htaccess files is a common way to create redirects for your site. They are relatively easy to make in basic text-edit programs, and there are several guides that provide further instruction.

Make your website as secure as possible

In the section above, we listed six different ways to type in a URL. There’s one small but important difference among those versions: http:// and https:// are not the same. The “s” in “https” stands for secure.

Sites beginning with https:// are secured with an SSL certificate, meaning the connection between the server and user is encrypted. This helps keep the user's data from being intercepted or stolen.

It's always recommended that sites switch to https://. Search traffic for SSL-secured sites is slightly higher, all other things being equal.

Recommended tool

Most web hosting services make activating SSL certificates as easy as clicking a button. Weebly, for example, has a simple on/off switch. For everyone else, letsencrypt.org has a mission to spread free SSL security across the web.

Assess how long it takes your page to load

When sites take too long to load (and this could mean the difference between three seconds and five seconds), users turn away. Google does not want to recommend sites that aren't useful.

There are many ways to freely and easily test site speed (see below). If tested and shown to be slow-loading, your page needs improvement. Common culprits of slow loads are:

- Adobe Flash media

- Bulky, inefficient file types, like large, uncompressed images

- Links to third-party media, like externally-hosted videos
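
For a very rough first check, you can time how long the raw HTML takes to download. This is only a sketch: the function name is made up, and it ignores images, scripts, and rendering, so the dedicated tools below will tell you much more.

```python
import time
from urllib.request import urlopen

def fetch_seconds(url, fetch=None, timeout=30.0):
    """Seconds taken to download the raw HTML at url (not the full page render)."""
    if fetch is None:
        # Default: a plain download using the standard library.
        fetch = lambda u: urlopen(u, timeout=timeout).read()
    start = time.perf_counter()
    fetch(url)
    return time.perf_counter() - start

# Example call (requires network access, so it is left commented out):
# print(f"{fetch_seconds('https://www.example.com/'):.2f} seconds")
```

The optional fetch argument is there so you can swap in your own download function or a stand-in while experimenting.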

Recommended tool

Google's PageSpeed Insights tool is a natural first choice, but there are also GTmetrix and Pingdom, both of which are very user-friendly.

Keep URLs short and sweet

URLs need to be simple and obvious. Both users and search engines read URLs to determine their content and usefulness. When creating an optimized URL:

- Keep them short

- Don't stack keywords together; less is more

- Avoid weird symbols and punctuation marks

- Separate words by hyphens, not spaces or underscores

- Structure all subpage URL names consistently
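
The checklist above can be sketched as a small script. The 60-character limit and the exact wording of each issue are our own assumptions, not official rules, so treat the output as a prompt for review rather than a verdict.

```python
import re
from urllib.parse import urlparse

MAX_PATH_LENGTH = 60  # assumed threshold; there is no official limit

def url_issues(url):
    """Return a list of possible problems with the path portion of a URL."""
    path = urlparse(url).path
    issues = []
    if len(path) > MAX_PATH_LENGTH:
        issues.append("path is long")
    if "_" in path or " " in path or "%20" in path:
        issues.append("uses underscores or spaces instead of hyphens")
    # Anything outside letters, digits, and common separators counts as unusual.
    if re.search(r"[^A-Za-z0-9/_\-. %]", path):
        issues.append("contains unusual symbols")
    # A repeated word is a hint that keywords are being stacked.
    words = [word for word in re.split(r"[/\-_. ]+", path.lower()) if word]
    if len(words) != len(set(words)):
        issues.append("repeats a word")
    return issues

print(url_issues("https://example.com/blog/seo-audit-guide"))
print(url_issues("https://example.com/seo_tips/best-seo-tips!"))
```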

Recommended tool

Check out popular, authoritative websites' URLs and how they're written. Compare and use what works for you.

Optimize title tags and meta descriptions

When your website appears in search results, wherever it ranks, it will (hopefully) have a title tag and meta description. The title tag is the clickable hyperlink to your website. The meta description is the text beneath the link that describes the site's contents.

What makes a good meta description?

Here’s an example of a solid title tag and meta description:

If these details aren't manually specified, they're auto-filled by Google and nearly always less impactful than they could be. For example, if not manually written, homehypemonkey.com might appear in Google search results with the link (aka the title tag) “homehypemonkey – home” or something similarly dull.

Likewise, its descriptive text (aka meta description) might be pulled from the first piece of text on the home page, which could read something like, “Why Home Hype Monkey? About Us Webinars Services Blog Testimonials Contact Us,” which is not likely to grab potential customers.

Instead, title tags should briefly describe the page and what it offers, like the real tag "Home Hype Monkey: Real Estate Digital Marketing." Short and sweet.

The meta description should be slightly longer but target industry keywords: "Top strategies for leasing student housing in your hometown."
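
To check what a page currently declares, you can pull the title tag and meta description straight out of its HTML. Here is a minimal sketch using Python's built-in HTML parser; the sample page is made up, reusing the title and description from the examples above.

```python
from html.parser import HTMLParser

class HeadTagParser(HTMLParser):
    """Collects the <title> text and the meta description from a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attr.get("name") or "").lower() == "description":
            self.meta_description = (attr.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data

def head_tags(html):
    """Return (title, meta description); either may be an empty string."""
    parser = HeadTagParser()
    parser.feed(html)
    parser.close()
    return parser.title.strip(), parser.meta_description

# Made-up page for illustration.
SAMPLE_PAGE = """
<html>
  <head>
    <title>Home Hype Monkey: Real Estate Digital Marketing</title>
    <meta name="description" content="Top strategies for leasing student housing in your hometown.">
  </head>
  <body><h1>Real Estate Digital Marketing</h1></body>
</html>
"""
print(head_tags(SAMPLE_PAGE))
```

An empty string in either position means the page leaves that detail for Google to fill in.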

Recommended tool

Same as above. There's no perfect tool to craft any title, copy, or content, but learning from successful examples is a great place to start.

You want clear H1s with relevant keywords

Return clicks matter just as much as first-time clicks, if not more.

Once a user visits your webpage, they need to know who you are and what you do immediately. If your landing page is cryptic or confusing, the user is likely to leave in search of a more straightforward source.

The page should have a large, obvious, direct H1 header that includes your primary keyword(s). Any subsequent headers (H2s, H3s, etc.) should be smaller but also include keywords.

Don't forget: search engines search the contents of your page for keywords and phrases.
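
One way to audit your headers is to list them and see which ones skip your primary keyword. The sketch below is only an illustration: the sample page and the keyword are made up, and it uses a simple, case-insensitive text match.

```python
from html.parser import HTMLParser

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

class HeadingParser(HTMLParser):
    """Collects [tag, text] pairs for every header on the page, in order."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._open = False

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self.headings.append([tag, ""])
            self._open = True

    def handle_endtag(self, tag):
        if tag in HEADING_TAGS:
            self._open = False

    def handle_data(self, data):
        if self._open:
            self.headings[-1][1] += data

def heading_report(html, keyword):
    """Return (number of H1s, headers that do not mention keyword)."""
    parser = HeadingParser()
    parser.feed(html)
    parser.close()
    h1_count = sum(1 for tag, _ in parser.headings if tag == "h1")
    missing = [(tag, text.strip()) for tag, text in parser.headings
               if keyword.lower() not in text.lower()]
    return h1_count, missing

# Made-up page for illustration.
SAMPLE_HEADINGS = """
<h1>Real Estate Digital Marketing</h1>
<h2>Digital Marketing Services</h2>
<h2>About Us</h2>
"""
print(heading_report(SAMPLE_HEADINGS, "digital marketing"))
```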

Include all images with “alt text”

Any time you upload an image to your website, you should include good alt text. Alt (short for alternative) text is a succinctly written description of an image, and it's used in two important ways.

First, if the image is ever unable to load, the alt text will appear on the screen in its place to provide context. Second, for those who have visual impairments, the text can be read in place of the image, allowing them to experience your site.

Crucially, this alt text is also read by GoogleBot and other search engine crawlers to help them contextualize and rank your site. Good alt text can improve your search ranking, while nonexistent alt text can harm it.
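
Finding images without alt text is easy to automate. This sketch flags every image whose alt attribute is missing or empty; the sample HTML is made up. Note that an intentionally empty alt is sometimes used for purely decorative images, so review the list rather than treating every entry as an error.

```python
from html.parser import HTMLParser

class ImageAltParser(HTMLParser):
    """Records the src of every <img> whose alt text is missing or blank."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attr = dict(attrs)
            if not (attr.get("alt") or "").strip():
                self.missing_alt.append(attr.get("src") or "(no src)")

def images_missing_alt(html):
    """Return the src values of images that have no usable alt text."""
    parser = ImageAltParser()
    parser.feed(html)
    parser.close()
    return parser.missing_alt

# Made-up markup for illustration.
SAMPLE_IMAGES = """
<img src="team.jpg" alt="Our leasing team at the front desk">
<img src="banner.png">
<img src="divider.gif" alt="">
"""
print(images_missing_alt(SAMPLE_IMAGES))
```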

Determine what keywords users search for

Google searches aren't random; people search in trends. They tend to think of the same words and phrases when they search, and they also tend to use suggested searches most often.

Because of this, one of the biggest and most important components of SEO is keyword optimization. Target keywords and phrases related to your industry in each of the following places:

- Site names

- Title tags

- Meta descriptions

- Headers

- Sub-headers

- Alt text

- Your website’s content

Many online tools analyze keywords and show trends, popular keywords, common associations, and which sites use keywords. We highly recommend familiarizing yourself with the keywords in your industry to optimize your website’s ranking.

Recommended tool

WordStream's Free Keyword Tool and Ahrefs' Site Explorer will both break down which phrases get clicks and where those clicks lead.

Fill in those “content gaps”

Content gaps are keywords for which competitors rank higher than your site, or for which you don't rank at all.

These missing keywords are the gaps in your keyword optimization, and they are therefore the biggest opportunities for enhancing your SEO through keywords. Keyword tools are, again, the go-to here. For example, Ahrefs' Site Explorer has a Competing Domains report showing the sites that most heavily overlap with yours in terms of keywords.

Super useful!

Then, plugging those sites into Ahrefs' Content Gap report will reveal which keywords those sites rank highly for that you don't, and vice versa.

It's difficult to overstate how crucial it is to maximize your keyword targeting, especially concerning your closest and most successful competitors. If they see more traffic based on simple phrases, you must identify these gaps and level the playing field.
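
If you export keyword rankings from any keyword tool, the definition of a content gap is simple to express in code. The keywords and positions below are invented for illustration, and the sketch assumes each export is a mapping of keyword to search position (1 being the top result).

```python
def content_gaps(my_ranks, competitor_ranks):
    """Keywords a competitor ranks for where you rank lower or not at all."""
    gaps = [keyword for keyword, their_position in competitor_ranks.items()
            if keyword not in my_ranks or my_ranks[keyword] > their_position]
    return sorted(gaps)

# Invented rankings: keyword -> position on the results page.
mine = {"student housing marketing": 4, "apartment seo": 12}
theirs = {"student housing marketing": 9, "apartment seo": 3, "leasing ads": 5}

print(content_gaps(mine, theirs))
```

Here the competitor outranks you for one keyword and ranks for another you don't target at all, so both come back as gaps.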

Recommended tool

Same as above. WordStream's Free Keyword Tool, Ahrefs' Site Explorer, and any number of other keyword tools.

Avoid duplicate content

We've stressed how important it is to target as many major keywords in your field as possible. Now, we have to caution against doing so in the wrong way.

Duplicate content (closely-worded or repeated text) across multiple pages kills search rankings. If Google discovers multiple instances of the same text across multiple subpages on your site, it will lower your ranking.

Even worse, if the text on your site closely matches the text on a completely different site, Google is less likely to think you're useful and more likely to think you're spam.

A great rule of thumb is to avoid duplicate content, whether unintentional or plagiarized. Keywords are great, key phrases are good, but key sentences and key paragraphs don't exist.

If you're ever copying a full paragraph or even a full sentence word for word, go back and rewrite it. It doesn't just hurt your ethical standards — it hurts your search ranking, too.
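
For a quick, do-it-yourself comparison of two passages, you can measure how many short word sequences they share. The sketch below uses three-word sequences and a simple overlap score between 0 (nothing shared) and 1 (identical). This is our own illustration, not how Google or any particular tool detects duplicates.

```python
import re

def shingles(text, size=3):
    """Return the set of consecutive word sequences of the given size."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}

def similarity(first, second, size=3):
    """Share of word sequences the two texts have in common (0.0 to 1.0)."""
    a, b = shingles(first, size), shingles(second, size)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)

page_one = "We help landlords lease student housing fast"
page_two = "We help landlords lease student housing quickly and affordably"
print(round(similarity(page_one, page_two), 2))
```

A score creeping toward 1 between two of your own pages is a sign one of them needs a rewrite.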

Recommended tool

Copyscape is a great (and free to begin) website to check for duplicate content.

Avoid thin or sparse content

We mentioned before that overly bulky pages tend to load slowly, hurting users’ experience and your search ranking. Well, pages that are too small hurt both, as well.

Low content pages, also called low word count pages and thin content pages, have relatively little text and/or other media. This makes them less useful for users, and search engines know it.

Search engines will rank some pages with thin content lower. There is no hard-and-fast SEO rule as to what “too thin” or “too thick” is. Yet, when posting content, ask yourself: does this page answer the user’s question, or will the user go back to their initial search?
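
If you want a number to compare pages against each other, counting the visible words is a reasonable start. This sketch skips script and style blocks and deliberately applies no cutoff, since there is no official threshold for "too thin." The sample page is made up.

```python
from html.parser import HTMLParser

class WordCounter(HTMLParser):
    """Counts words in page text, ignoring <script> and <style> contents."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.words = 0
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words += len(data.split())

def visible_word_count(html):
    """Return the number of words a visitor would actually read."""
    counter = WordCounter()
    counter.feed(html)
    counter.close()
    return counter.words

# Made-up page for illustration.
SAMPLE_THIN_PAGE = (
    "<html><head><style>p { color: red; }</style></head>"
    "<body><h1>Student Housing</h1><p>Top strategies for leasing.</p>"
    "<script>var x = 1;</script></body></html>"
)
print(visible_word_count(SAMPLE_THIN_PAGE))
```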

Recommended tool

There are sites like wordcounter.net that allow you to quickly view other pages’ word counts. The crawlers mentioned above, like Screaming Frog SEO Spider, have low word count and low content metrics as part of their reports, too.

Fix broken links

Broken links (also called “dead links”) are links that are no longer active. They can happen for any number of reasons. But whatever the reason, they always result in lower search rankings and a worse user experience — since users can't access certain information.

As GoogleBot and its peers attempt to navigate your site, if they encounter links they cannot traverse, their journey in that direction stops, meaning that they could miss huge chunks of content. If a dead link on your home page was the only route to a secondary page containing dozens of other links, search engines will miss that page and everything it links to (unless it's linked from somewhere else).

Fixing dead links can mean either replacing the dead content or simply redirecting users from the dead page to a live one.
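
If you have a list of links to test, a short script can flag the dead ones. This is a minimal sketch using only Python's standard library; the function names are our own, and it treats any status of 400 or above (or no response at all) as broken. Some servers refuse the lightweight HEAD request used here, so double-check anything it flags.

```python
from urllib.error import HTTPError
from urllib.request import Request, urlopen

def link_status(url, timeout=10.0):
    """Return the HTTP status code for url, or None if it could not be reached."""
    request = Request(url, method="HEAD", headers={"User-Agent": "link-audit-sketch"})
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status
    except HTTPError as error:
        return error.code  # e.g. 404 or 500
    except OSError:
        return None  # DNS failure, refused connection, timeout, and so on

def broken_links(urls, get_status=link_status):
    """Return the URLs whose status is missing or 400 and above."""
    broken = []
    for url in urls:
        status = get_status(url)
        if status is None or status >= 400:
            broken.append(url)
    return broken

# Offline demonstration with made-up statuses instead of real requests.
fake_statuses = {"https://example.com/": 200, "https://example.com/old-page": 404}
print(broken_links(list(fake_statuses), get_status=fake_statuses.get))
```

To check real links, call broken_links with your own list of URLs and leave get_status at its default.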

Recommended tool

Again, crawlers will attempt to navigate every internal link on your site and can easily detect broken links. Most will give detailed reasons why the link is broken, giving you the ability to fix them.

Content management systems (CMSs) have their own checkers; for example, WordPress users have a simple free plugin appropriately named Broken Link Checker. Chrome has its own extension, also named Broken Link Checker.

Ensure that your website is mobile-friendly

Internet users no longer rely solely on home computers, or even laptops. They now have tablets and mobile phones with multiple operating systems and resolutions. Thus, your website needs to be mobile-friendly.

Google uses mobile compatibility as part of its search ranking metric, meaning mobile issues will lower your chances of being seen. On top of that, if users attempt to access your site on their phones and tablets and find it difficult, they will return to their initial search.

Recommended tool

Google has an easy tool at https://search.google.com/test/mobile-friendly that allows you to check sites for mobile compatibility.

Check for hacked or spammed pages

Hacked pages are a relatively rare problem, but they can destroy your search ranking. Say, for example, you check to see if your site and all of its subpages are indexed as we recommended and your site actually has more pages indexed than it should. A quick check on those pages' content will likely show that someone hacked your site.

Using the site: operator when searching (as we mentioned in the indexing section) will show how many pages are indexed and let you see their titles and meta descriptions. If those texts describe any pages that aren't yours (especially pages related to adult content, cheap prescription drugs, MLM organizations, and the like), it's time to re-secure and clean up your site.
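
Once you've collected the indexed URLs and titles, the comparison itself can be sketched in a few lines. Everything below is illustrative: the URLs, titles, and watch-list terms are made up, and you should tailor the terms to whatever would be out of place on your site.

```python
# Hypothetical watch list; adjust to what would look out of place on your site.
SUSPICIOUS_TERMS = ("cheap pills", "casino", "payday loan")

def unexpected_pages(indexed_urls, known_urls):
    """URLs that appear in search results but that you never published."""
    return sorted(set(indexed_urls) - set(known_urls))

def suspicious_titles(titles, terms=SUSPICIOUS_TERMS):
    """Titles that mention any watch-list term, ignoring case."""
    return [title for title in titles if any(term in title.lower() for term in terms)]

# Made-up data for illustration.
known = ["https://example.com/", "https://example.com/blog"]
indexed = known + ["https://example.com/x9/cheap-pills"]
titles = ["Home Hype Monkey: Real Estate Digital Marketing", "Buy Cheap Pills Online"]

print(unexpected_pages(indexed, known))
print(suspicious_titles(titles))
```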

Recommended tool

There are a lot of services that help secure sites to prevent hacking, as well as those that help “clean” sites that have already been hacked. Sucuri.net is a good example of a site known for both services.

Use external links and backlinks to build your website’s authority

Google chooses which pages to display first based mainly on how relevant and authoritative they are. Relevance we've covered. It comes mainly from keywords and how closely your site matches search terms.

Authoritativeness, however, comes mainly from the number of links to your site from other pages and the quality of those pages. Essentially, it's looking to see if your webpage runs with the "right crowd."

No tool will automatically build external links for you; it's up to you to actively encourage other websites to link to yours. You can do this by simply releasing noteworthy content, reaching out to other sites directly, and (as is often the case) purchasing placements in the form of ads and/or sponsored content. Keep in mind that Google expects paid links to be labeled as such (for example, with a rel="sponsored" attribute).

Avoid “black hat” SEO tricks

There are no SEO shortcuts. It takes time, due diligence, and an understanding of users’ intent to create an optimized website. For instance, suppose you take your website, go on a forum (like Reddit), and post the link dozens of times to various threads.

Once Google finds out (and it will), it may penalize your website for the infraction. If not addressed, it may remove your website from its index altogether, preventing anyone from finding your content through search.

Recommended tool

Yourself. Person-to-person (even through email) contacts are the best way to build backlinks for newer and smaller businesses. Hiring professional services like Smith.ai can help build the connections necessary to generate a solid backlink profile.

Use website crawlers to identify possible problems

We've mentioned SEO software (like website crawlers) a few times, and with good reason. This software allows you to view how search engines index your site and identify certain issues. Importantly, many are completely free and identify most (if not all) of the problems we've covered.

A good auditor/crawler program is like an all-inclusive starter package for SEO and should not be overlooked.

Recommended tool

Screaming Frog SEO Spider is a free and thorough crawler. Beam Us Up is another. There are also Octoparse, HTTrack, ParseHub, and dozens of others. Some, like Ahrefs and Moz Pro, cost money, but the expanded features are worth it.

Regularly audit your website’s SEO

Google's ranking algorithm changes constantly, as do those of every other search engine. The relative weight they put on relevance and authoritativeness is constantly shifting, and the weight they put on things like mobile-friendly factors and other emerging technology is constantly increasing.

A site with great SEO today could be a lagging disaster tomorrow, and not just because of changing search metrics. Webmasters change their guidelines, and your tools and plugins could stop working. External links may no longer load. And, perhaps most importantly, content becomes outdated.

So what can you do? Your website isn’t a one-time fix-it-and-forget-it deal. You constantly need to update your website and monitor its traffic to increase leads.

Recommended tool

Scan your website regularly with the auditors/crawlers mentioned above, along with the industry standard, Google Analytics. Together with frequent auditing and a basic working knowledge of SEO, these tools give your site a strong chance of seeing an improvement in traffic.

You can seek professional help with your website’s SEO

Now that you know how to complete a DIY SEO audit, there’s one last important thing to know: professionals can help.

We mentioned that backlinks are possibly the single most important aspect of SEO. Given that, professional help managing connections and communication can be a huge boon for growing businesses whose time and resources can be better spent elsewhere. It can also help larger businesses, which can save manpower by outsourcing connection generation to outside pros.

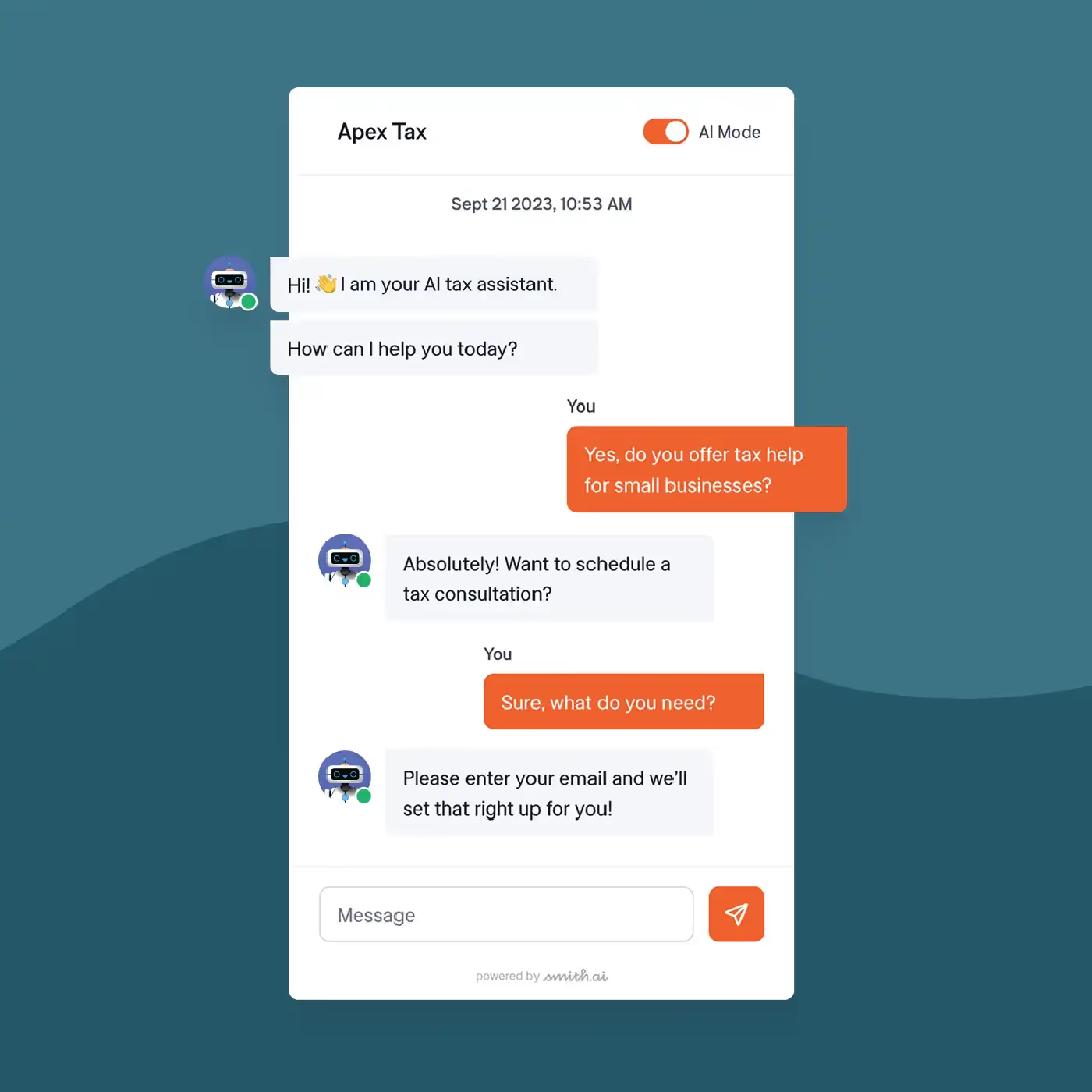

A company like Smith.ai — with its 24/7 live agents that answer calls, texts, website chat services, and even Facebook messenger — can be a huge asset in both customer satisfaction and connection building.

Consistent, prompt responsiveness is key to running a successful business. Smith.ai will help with response time, convert leads into clients, and promote solid backlinks. All of these things can enhance your website’s traffic.

If you want to improve visibility and connections, communication professionals can help.

Recommended tool

Smith.ai, obviously!

Connect with Smith.ai to explore your options

Remember, audit your site thoroughly and often to rank high and be seen. Look for gaps in content, replace broken links, optimize your keywords and tags, and speed up that website!

When you want to improve your business’s response times, call on Smith.ai to get the job done. You can book a free, 30-minute consultation with our team by making an appointment on Calendly. There, you can learn about our services, experience, and pricing options.

Take the faster path to growth. Get Smith.ai today.
