The law firm's framework for evaluating AI receptionists

You're reading Part III of The Complete Guide to AI Receptionists.

In Part I, we broke down the AI call handling landscape: three tiers of solutions, the role of humans, and the iceberg problem that makes vendor evaluation so difficult. In Part II, you identified your pain points, ranked your jobs to be done, and calculated what missed calls are costing your firm.

You've defined the role; now it's time to start recruiting. This post is about preparing for the interviews.

Map your systems first

Before you evaluate any vendor, document the infrastructure your AI receptionist will need to connect with. This step ensures your evaluation is grounded in your actual operational needs, not generic demos.

For each system, note what you have today and define the level of integration required. “We integrate with that” is the starting point — not the final answer.

Your phone system — Know your current provider and whether you own your number. Some vendors require full number porting; others work with call forwarding. This affects setup time, cost, and how disruptive implementation will be.

Your legal practice management system (LPMS) — Whether you use Clio, Filevine, Smokeball, or another platform, your receptionist solution will likely need to read from it, write to it, or both.

- Write to it: Determine whether basic lead creation and call logs are sufficient, or whether you need detailed field mapping for meaningful CRM updates.

- Read from it: Booking consultations requires real-time access to calendar availability. If the receptionist needs other data during calls (for example, to answer an existing client asking for a case update), define the inputs, the outputs, and where that data lives.

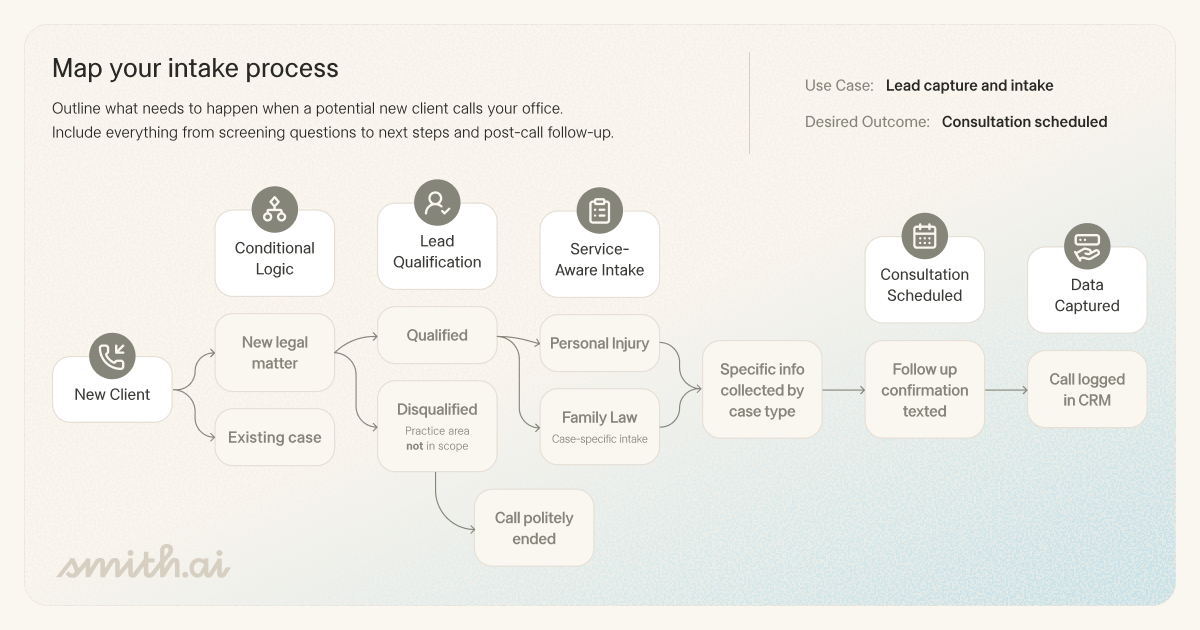

Your intake process — Document screening questions and qualification thresholds by practice area (e.g., personal injury case minimums, jurisdiction checks, conflict screening). This becomes the configuration blueprint for your intake call flows.
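To make the "configuration blueprint" idea concrete, here is a minimal Python sketch of how the screening rules for one practice area might be written down before you talk to vendors. The class, the field names, the $25,000 case minimum, and the CA/NV jurisdictions are all hypothetical placeholders, not any vendor's configuration format. The conflict flag assumes a conflict check has already been run elsewhere (for example, against your LPMS); it is not a substitute for one.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class IntakeRule:
    """Hypothetical screening rule for one practice area (not a real vendor API)."""
    practice_area: str
    questions: List[str]
    min_case_value: float = 0.0                      # e.g., a PI case minimum
    allowed_jurisdictions: Set[str] = field(default_factory=set)

    def qualifies(self, answers: Dict[str, object]) -> Tuple[bool, str]:
        """Return (qualified, reason) for a caller's intake answers."""
        # Assumes a separate conflict check has already set this flag.
        if answers.get("conflict_flag"):
            return False, "possible conflict: route to attorney review"
        # An empty jurisdiction set means "no jurisdiction restriction".
        if self.allowed_jurisdictions and answers.get("jurisdiction") not in self.allowed_jurisdictions:
            return False, "outside jurisdiction"
        if answers.get("estimated_value", 0.0) < self.min_case_value:
            return False, "below case minimum"
        return True, "qualified"


# Illustrative personal injury rule; every value here is a placeholder.
pi = IntakeRule(
    practice_area="personal injury",
    questions=["When did the incident occur?", "Where did it happen?", "Were you injured?"],
    min_case_value=25_000,
    allowed_jurisdictions={"CA", "NV"},
)

print(pi.qualifies({"jurisdiction": "CA", "estimated_value": 50_000}))  # (True, 'qualified')
print(pi.qualifies({"jurisdiction": "TX", "estimated_value": 50_000}))  # (False, 'outside jurisdiction')
```

Whether you write this in code, a spreadsheet, or a one-page document matters less than having every threshold stated explicitly, so each vendor configures against the same spec.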

Your escalation path — Identify high-stakes scenarios: distressed callers, urgent client issues, opposing counsel. Define how these are handled today. This is often overlooked — and critical when evaluating vendors.

Bring this system map into every vendor conversation. It shifts the discussion from a generic demo to a focused evaluation of fit.

The evaluation framework

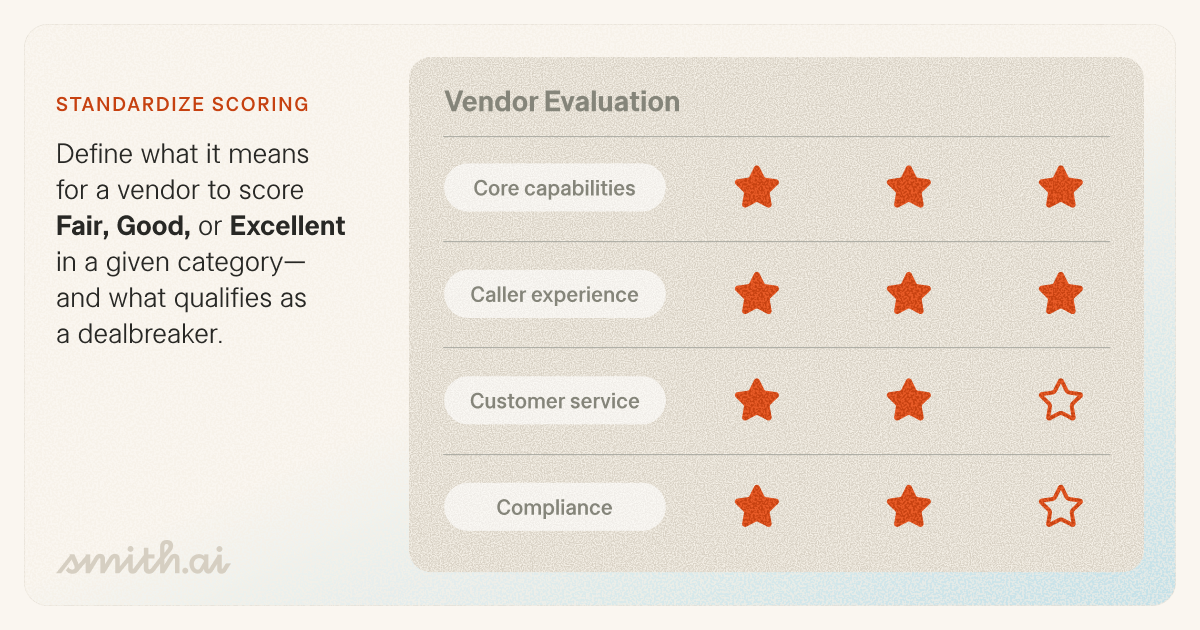

A comprehensive evaluation covers the four Cs: core capabilities, caller experience, customer service, and compliance. Considering criteria across all four will help you avoid the worst of the iceberg problem.

Core capabilities

Your jobs to be done define your capabilities criteria. Identify your top priorities and clearly separate dealbreakers from acceptable trade-offs.

Focus on whether the system can reliably execute your highest-value workflows across different scenarios, not just in ideal conditions.

Key areas to assess:

- Conditional logic: Ability to adapt intake flows by practice area, caller type, and responses

- Scenario handling: Performance across your most common and highest-stakes call types

- Routing accuracy: Consistency in directing callers to the correct outcome

- System adaptability: Speed and ease of updating workflows as your requirements evolve

Example priorities:

- Qualify and screen new leads consistently [dealbreaker]

- Maintain professionalism that reflects your brand [dealbreaker]

- Route every caller to the right outcome

- Sync caller data to your systems without manual re-entry

- Escalate to a human when the situation requires it

Caller experience

Your callers are often in high-stress situations. The quality of their first interaction directly influences trust, conversion, and client satisfaction.

Evaluate how well the system handles real-world variability, not just scripted flows.

Key areas to assess:

- Tone and customization: Alignment with your firm’s brand and communication style

- Adaptability: Ability to navigate unscripted, complex, or ambiguous conversations

- Failure handling: What happens when the AI reaches its limits

- Escalation quality: When and how calls transition to a human, and what context is passed

Hybrid models — combining AI with trained live agents — typically perform better in scenarios requiring empathy, judgment, or nuance.

Customer service

The vendor’s service model is a critical component of long-term success. AI receptionist systems require ongoing iteration as your firm evolves.

Evaluate the vendor’s ability to support both initial setup and continuous optimization.

Key areas to assess:

- Ownership model: Who is responsible for building and maintaining workflows

- Implementation process: Structure, timeline, and presence of a testing phase

- Support maturity: Size, structure, and specialization of support and customer success teams

- Responsiveness: How issues are surfaced, communicated, and resolved

- Self-service vs. dependency: Whether your team can make updates independently

A vendor’s operational maturity often determines whether the system improves over time or stagnates.

Compliance

For law firms, compliance is inseparable from product evaluation. It directly affects your obligations around confidentiality, privilege, and client data protection.

Evaluate both technical safeguards and business stability.

Key areas to assess:

- Data ownership and storage: Where data resides and who controls it

- Security practices: Encryption, access controls, and auditability

- Privilege handling: Treatment of sensitive and legally protected information

- Data lifecycle: Retention, deletion, and portability policies

- Contract structure: Billing model, term length, and exit conditions

Vendor stability is part of compliance. Sensitive client data requires a partner with long-term viability.

What to look for in a demo

Demos are curated. Your job is to push past the script.

Ask them to walk through your scenarios, not theirs: a potential PI client calling after hours, an existing client asking for a case update, opposing counsel requesting a callback. Ask what happens when the caller goes off-script.

Watch how they handle hard questions. Ask what happens when the LPMS integration breaks, or what the AI does with a caller it can’t understand. The best vendors welcome tough questions. Defensiveness tells you something.

Evaluate the vendor's process, not just their product. Do they ask about your practice areas, intake criteria, and conflict-check process? Or is it mostly them doing the talking? A vendor who wants to learn your firm is invested in your success.

The quality of a vendor’s discovery process predicts the quality of the system they’ll build.

Questions to ask every vendor

Keep this list consistent across conversations so you can compare answers side by side.

Core capabilities

- How does the system execute our specific jobs to be done across different call scenarios?

- How does intake logic adapt across practice areas, caller types, and responses?

- How are routing decisions made, and how consistent are they?

- How are workflow updates handled when our requirements change?

Caller experience

- How does the system handle emotionally distressed or sensitive callers?

- What types of calls can the AI not handle effectively?

- What happens when the system reaches its limits?

- When and how are calls escalated to a human, and what context is passed?

- If live agents are involved, how are they trained and integrated into the experience?

Customer service

- Who is responsible for building and maintaining call flows?

- What does the implementation process look like, including testing?

- How are updates, optimizations, and changes managed over time?

- How are performance issues identified, communicated, and resolved?

- What level of access does our team have to make changes independently?

- What does ongoing support look like (dedicated contact vs. shared queue)?

Compliance

- Where is call data stored, and who owns it?

- What are the data retention and deletion policies?

- How is data secured (in transit and at rest), and who controls access?

- How is privileged or sensitive information handled during intake?

- What happens to our data if we cancel?

- What are the contract terms, billing model, and cancellation policy?

Define your standards

The most effective way to compare vendors is with a standardized scoring model. Use the same criteria and scale for each vendor, and include dealbreaker thresholds so that a critical failure overrides the total score. At a minimum, score every vendor from 0 (dealbreaker) to 4 (excellent) on each of the four Cs: core capabilities, caller experience, customer service, and compliance.

When you tally the scores, you’re not looking for the highest number. You’re looking for the best fit with no unacceptable gaps.
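The scoring rule described above can be sketched in a few lines of Python. This is a minimal illustration, not the upcoming template: it assumes each of the four Cs gets a 0 to 4 score, treats any 0 as a dealbreaker, and lets you raise the bar on a specific criterion through an optional `minimums` mapping (a hypothetical parameter). Vendors with any failing criterion are dropped before the rest are ranked by total. The vendor names and scores are made up.

```python
from typing import Dict, List, Optional, Tuple

# The four Cs; each is scored 0 (dealbreaker) to 4 (excellent).
FOUR_CS = ("core capabilities", "caller experience", "customer service", "compliance")


def score_vendor(scores: Dict[str, int],
                 minimums: Optional[Dict[str, int]] = None) -> Tuple[int, List[str]]:
    """Return (total score, criteria that fall below their threshold)."""
    minimums = minimums or {}
    for c in FOUR_CS:
        # A missing criterion raises KeyError; out-of-range scores raise ValueError.
        if not 0 <= scores[c] <= 4:
            raise ValueError(f"{c} score must be between 0 and 4, got {scores[c]}")
    # A 0 always fails; a custom minimum can only raise the bar, never lower it.
    failures = [c for c in FOUR_CS if scores[c] < max(1, minimums.get(c, 1))]
    total = sum(scores[c] for c in FOUR_CS)
    return total, failures


def rank_vendors(vendors: Dict[str, Dict[str, int]],
                 minimums: Optional[Dict[str, int]] = None) -> List[Tuple[str, int]]:
    """Drop vendors with any failing criterion, then sort the rest by total, highest first."""
    viable = []
    for name, scores in vendors.items():
        total, failures = score_vendor(scores, minimums)
        if not failures:
            viable.append((name, total))
    return sorted(viable, key=lambda item: item[1], reverse=True)


vendors = {
    "Vendor A": {"core capabilities": 4, "caller experience": 4, "customer service": 4, "compliance": 0},
    "Vendor B": {"core capabilities": 3, "caller experience": 3, "customer service": 3, "compliance": 2},
    "Vendor C": {"core capabilities": 3, "caller experience": 2, "customer service": 2, "compliance": 3},
}

print(rank_vendors(vendors))                               # [('Vendor B', 11), ('Vendor C', 10)]
print(rank_vendors(vendors, minimums={"compliance": 3}))   # [('Vendor C', 10)]
```

Vendor A has the highest raw total (12) but never makes the list, and requiring a compliance score of at least 3 removes Vendor B as well. That is the "best fit with no unacceptable gaps" idea expressed as a rule rather than a judgment made after the fact.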

Coming soon: A downloadable scoring template you can use in every vendor conversation.

Next up: Choosing your vendor

In Part IV, we'll cover how to use your evaluation results to make a confident decision, including how to define “good enough,” avoid over-optimization, and move forward without second-guessing.

Stay tuned for The Complete Guide to AI Receptionists, Part IV.

Want one-on-one help mapping your requirements to the right solution? Book a free consultation with a product expert.